FireFlink AI Chat Bot

Bridging the gap between manual intent and automated execution — transforming plain-English prompts into executable test suites.

Role

UI / UX Design

Timeline

Nov 2024

Tools

Figma, FigJam

Platform

Web

01

Context

The evolution of efficiency

FireFlink originally pioneered test automation using a robust library of predefined NLP steps — allowing users to "write" scripts by selecting functional English phrases. It was a powerful system. But as test suites grew in complexity, manually assembling these steps became a new bottleneck.

Even with NLP steps available, QA engineers were still spending too much time searching, mapping, and assembling — step by step.

- Discovery Fatigue — Searching through thousands of predefined NLP steps to find the right one was time-consuming

- Logical Mapping — Testers still had to manually define the sequence of automation logic

- Scalability — Building a 50-step test case one step at a time was too slow for rapid release cycles

02

My Contribution

What I worked on

I designed the AI Chatbot interface, an intelligent layer built on top of the existing NLP engine. The goal was to move the user from "Step Builder" to "Intent Architect": describe what you want to test, and let the AI figure out the steps.

03

The Shift

From "Search & Select" to "Prompt & Generate"

The core innovation wasn't just adding a chat box — it was leveraging AI to navigate the existing automation architecture on behalf of the user. The interface had to make this shift feel natural and trustworthy.

| Feature | Legacy NLP Tool | FireFlink AI |
|---|---|---|
| Input Method | Searching for specific NLP phrases | Describing a full user journey in plain English |
| Effort | Manual step-by-step assembly | Instant generation of entire test flows |
| Logic | User defines the sequence | AI interprets intent and maps to the correct steps |

04

The Flow

How the co-pilot works

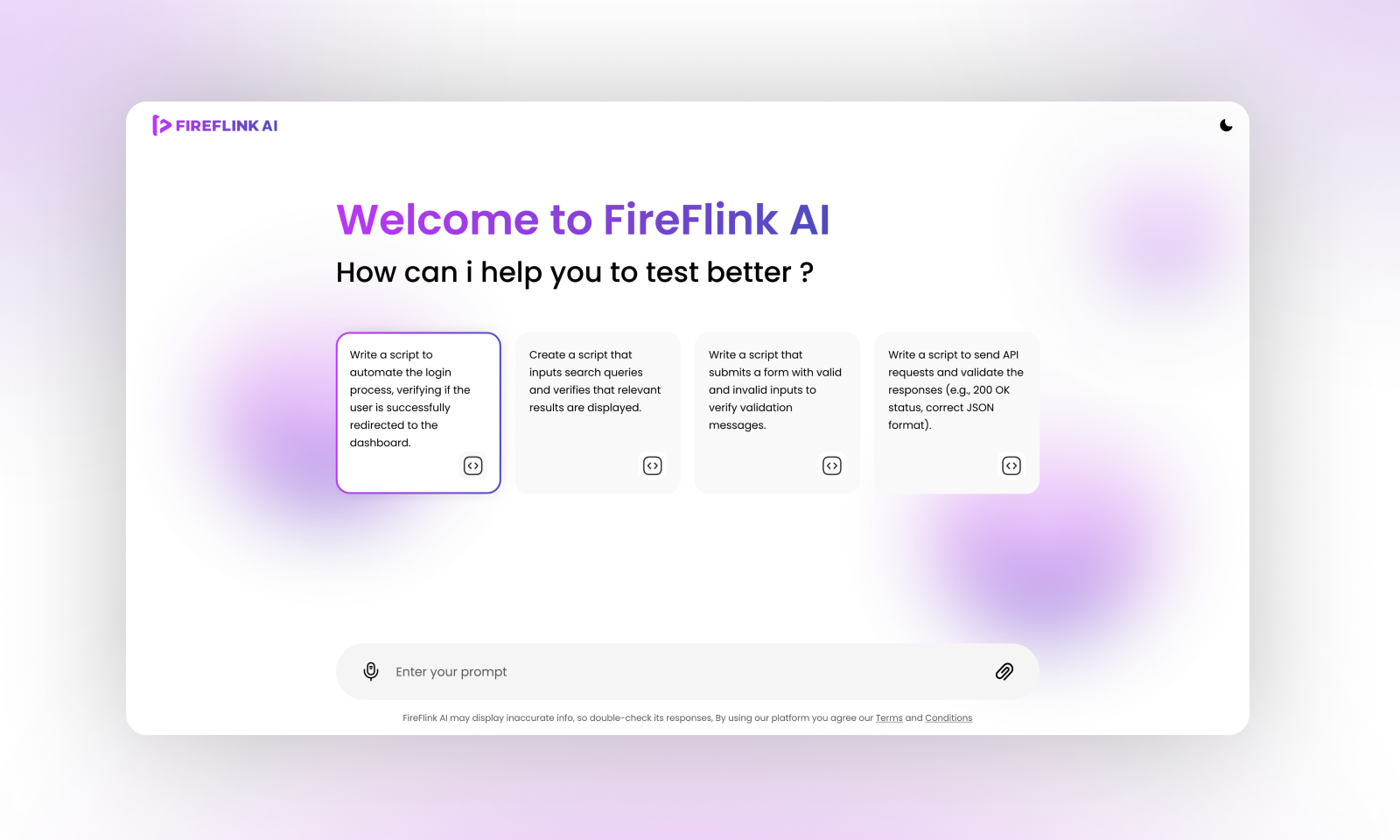

The chatbot acts as a co-pilot — sitting alongside the test editor, understanding intent, and generating structured output the QA engineer can immediately use or refine.

01

Intent Capture

User enters a high-level goal — e.g., "Test the login flow for a guest user using credentials."

02

Mapping & Retrieval

AI analyzes the prompt and queries the predefined NLP library to find the exact steps required.

03

Sequence Generation

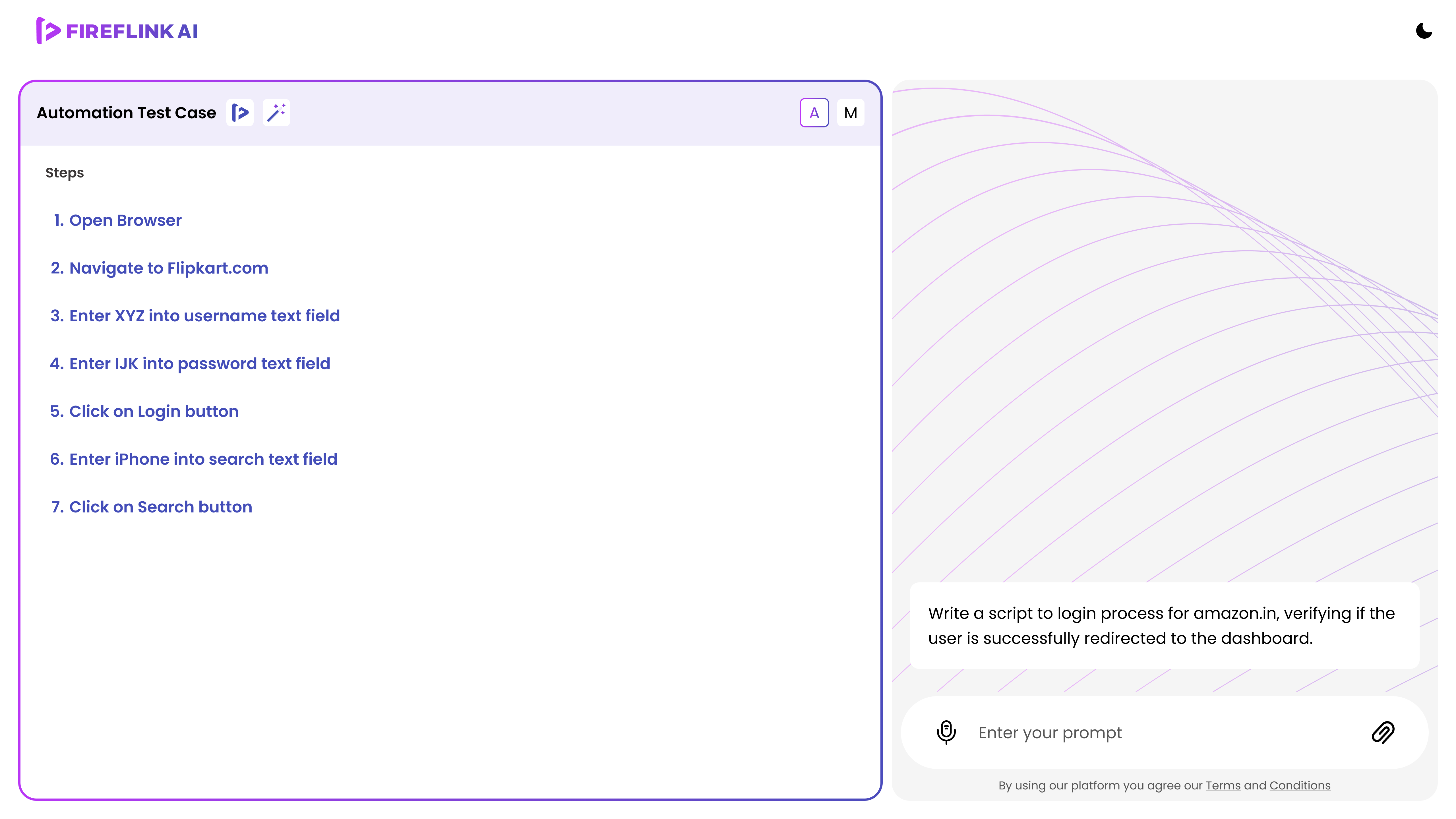

Instead of returning a single step, the chatbot presents the complete list of test cases, formatted for the FireFlink engine.

04

Refinement

User can tweak the list — e.g., "Add a step to verify the email" — before injecting it into the editor.
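For readers who think in code, the four stages above can be sketched as a tiny pipeline. This is only an illustration under loud assumptions: the step library, its tags, and the function names (`generate_steps`, `refine`, `add_to_script`) are all hypothetical, and simple keyword matching stands in for the AI's intent interpretation. It is not how FireFlink's engine works internally.

```python
import re

# Hypothetical stand-in for the predefined NLP step library:
# each step phrase is tagged with keywords it is relevant to.
STEP_LIBRARY = {
    "Navigate to the login page": {"login"},
    "Enter username and password": {"login", "credentials"},
    "Click the login button": {"login"},
    "Verify the email address": {"email"},
    "Add item to cart": {"cart", "checkout"},
}


def tokenize(prompt: str) -> set[str]:
    """Lowercase the prompt and split it into a set of words."""
    return set(re.findall(r"[a-z]+", prompt.lower()))


def generate_steps(prompt: str) -> list[str]:
    """Intent capture + mapping: return every library step whose
    tags overlap the words in the prompt, in library order."""
    words = tokenize(prompt)
    return [step for step, tags in STEP_LIBRARY.items() if tags & words]


def refine(steps: list[str], follow_up: str) -> list[str]:
    """Refinement: append steps matched by a follow-up prompt,
    skipping any that are already in the list."""
    extra = [s for s in generate_steps(follow_up) if s not in steps]
    return steps + extra


def add_to_script(script: list[str], steps: list[str]) -> list[str]:
    """'Add to Script': return the editor script with the steps appended."""
    return script + steps


steps = generate_steps("Test the login flow for a guest user using credentials.")
steps = refine(steps, "Add a step to verify the email")
script = add_to_script([], steps)
for number, step in enumerate(script, start=1):
    print(f"{number}. {step}")
```

The point of the sketch is the shape of the interaction, not the matching logic: one prompt yields a whole ordered list, a follow-up prompt edits that list, and a single call moves it into the script.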

05

Design

From wireframes to high fidelity

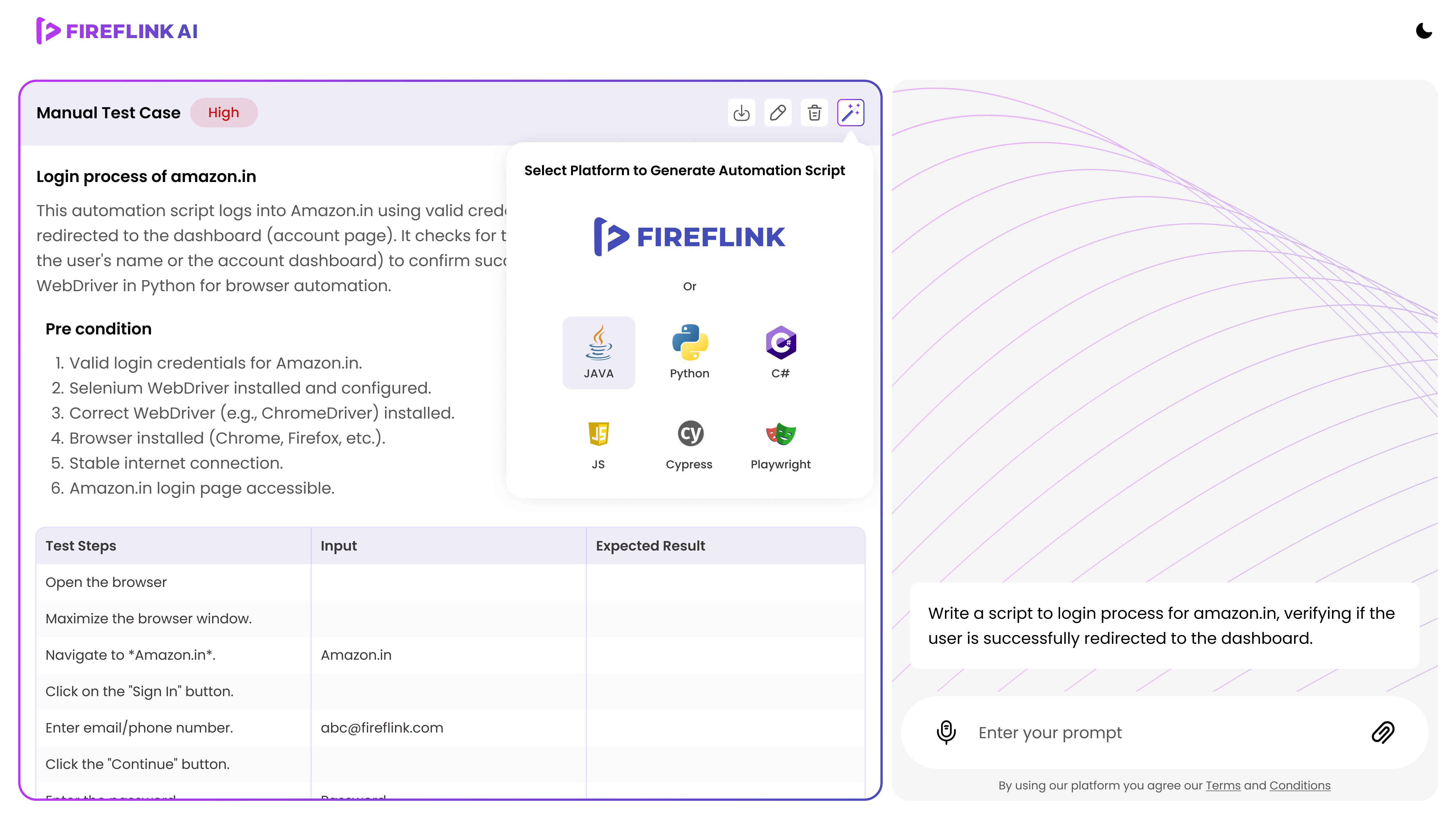

The design had to feel like a natural extension of the existing FireFlink editor — not a separate tool. Three key interface decisions shaped the final output.

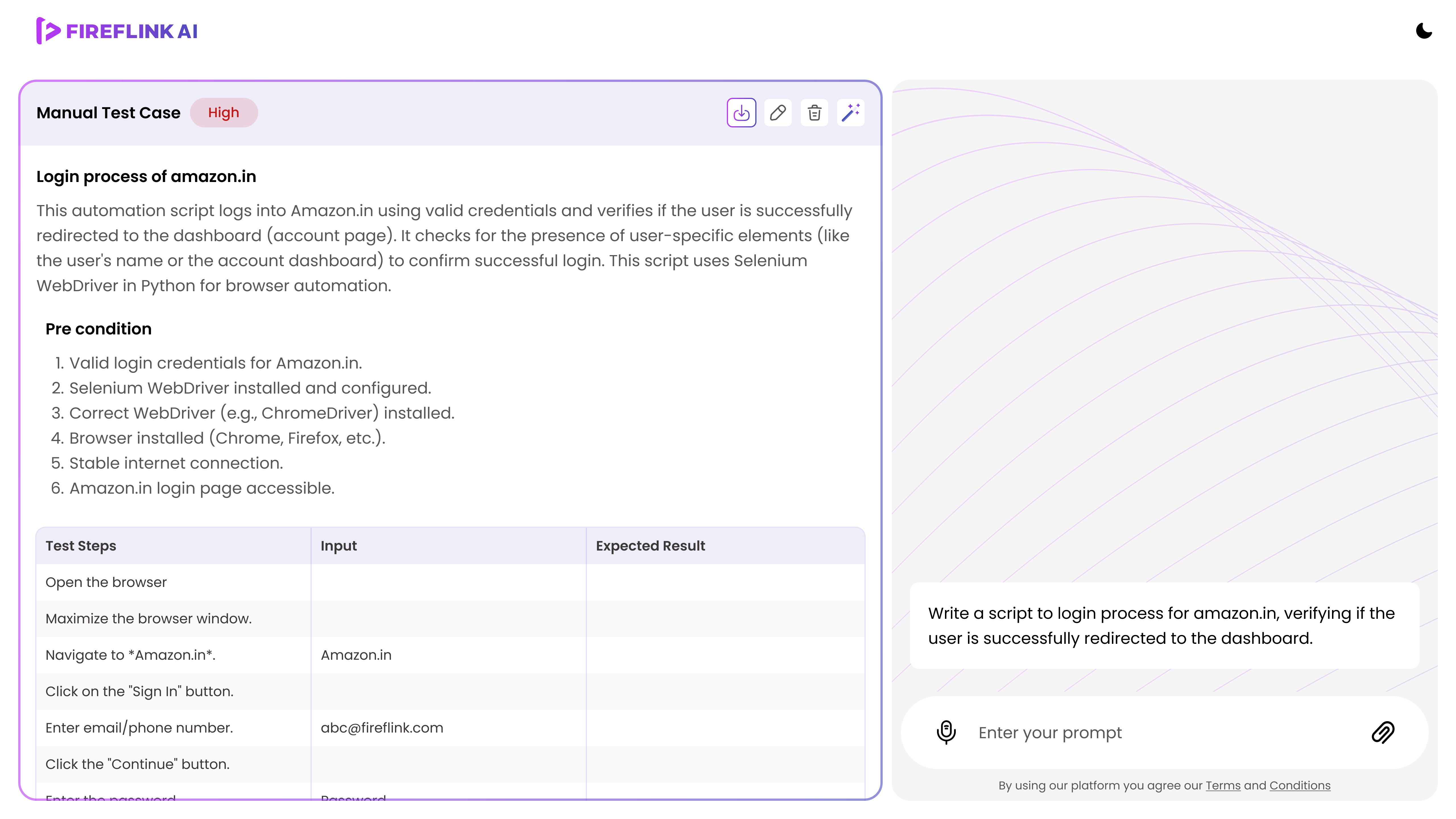

The Chatbot Interface

A docked side panel that maintains the context of the current test suite — always visible, never disruptive to the editor workflow.

Visual Feedback

Variables and locators within the chat response are visually highlighted — giving the user a window into the AI's reasoning and building trust in the generated output.

The "Add to Script" Action

A clear primary CTA bridges the gap between the chat output and the test editor, so moving from AI suggestion to live script takes a single action.

06

Outcome

What changed

By shifting the interaction to a generative chatbot model, the feature unlocked a new level of productivity for QA engineers — removing the search and assembly overhead entirely.

Zero search time

Users no longer browse the NLP library — the AI surfaces the right steps automatically

70% faster scripting

Complex end-to-end flows that took 20 minutes to assemble are now generated in seconds